Blockchain — new types of insider threat

According to a 2018 report by Cybersecurity Insiders, ninety percent of organizations feel vulnerable to insider attacks. The main enabling risk factors include too many users with excessive access privileges (37%), an increasing number of devices with access to sensitive data (36%), and the increasing complexity of information technology (35%).

Why attack “yourself”?

The insider threat is defined as a malicious threat to an organization that comes from people within the organization, such as employees, vendors or contractors, both current and former (Wikipedia).

The motives vary widely, from revenge to taking a job with the deliberate intent of damaging the company. The scale of attacks by current or former employees who still have access to some of the resources is large.

The aim of this article is to revisit well known insider threats from the blockchain applications perspective and, what is equally important, to shed light on the new insider threats of blockchain technology.

So sit back, relax and enjoy the journey.

Vicious insider

The classic and well-known insider threats affect any company or application, regardless of whether they use blockchain or not. Even the vectors and scenarios of the attack are very similar and the difference lies in the assets that are the target.

The threat can come from:

- malicious insiders like employees or contractors, who take advantage of their access to an organization’s intranet or buildings;

- negligent insiders, who cause harm through human error or by ignoring security policies;

- infiltrators, who are external actors that obtain legitimate access to the internal resources.

Organizations must perform a security analysis of their internal infrastructure and applications, covering both the IT and the physical world. They must also prepare their people, processes and technology for incident response. Security teams must monitor and analyze network traffic to identify anomalous behavior.

Now let’s focus on blockchain

Blockchain technology is often depicted as a holy grail for achieving a trustless and secure decentralized world where no one can control the whole system (do not worry, it is not Skynet yet). In fact, what the technology gives us is no more and no less than a decentralized, cryptographically secure and trustless database.

This is a great attribute of the technology, but organizations and applications that employ it are no less vulnerable to the many threats known from the non-blockchain world. Furthermore, the technology introduces specific conditions and limitations, for example public access to the data (I am considering mainly public blockchains because I am sceptical about private ones) or randomness and timestamps that are hard to obtain reliably, which increase the probability of a bug during the design and implementation of a blockchain-based system. As a consequence, the attack surface grows and new attack vectors appear.

Here comes the main character of this article: the insider threat. Blockchain does not defend against insider threats in any way. What is more, blockchain introduces new threats that can be classified as insider threats even though they do not exactly fit the generally accepted definition.

Blockchain critical assets

Blockchain technology introduces specific confidential assets in addition to well-known ones such as passwords, personal data, authentication codes, etc. The most critical assets are private keys, which allow whoever holds them to operate on the blockchain database on behalf of their owner. Think of your PGP private key: if it falls into the wrong hands, someone can impersonate you until you revoke it.

Private keys carry a high security risk because they are used to sign transactions that call smart contracts (that is, execute functions in programs stored in the blockchain), some of which are critical because they transfer cryptocurrencies and tokens worth millions of dollars. The set of possible operations depends on the type of data kept at the address corresponding to the private key. This can be compared to taking over the victim’s account.

Leaked private keys were behind many cryptocurrency exchange hacks, including the Mt.Gox hack in 2014 worth $460 million, the Bitfinex hack in 2016, the Coincheck hack in 2018 worth over $500 million and the Binance hack in 2019 worth $40 million. The victims were attacked by means of social engineering such as phishing, and no additional security measures protected the cryptocurrency wallets controlled by those private keys.

However, in some cases the attackers, including specific insiders, can gain access to the critical operations (e.g. transfer) without the private keys. I am going to introduce the definition of the specific insider threat in the next section and further present a few scenarios of the attacks.

Blockchain-specific internal threats

The new type of insider threat comes from the actors who add new blocks to the chain. They are called miners or validators depending on the consensus protocol. From this point on I am going to use the term miner for simplicity, but it should be understood as any type of node that verifies and adds new blocks.

One of the main security features of blockchain rests on the assumption that the majority of those miners are honest. Theoretically, miners controlling 51% of the mining power and working together could attack the network and modify the “unmodifiable” data in the blockchain.

However, in some cases, one miner is enough to perform an attack. His main advantage is that he can choose which transactions (from the pool of new transactions to be verified) are included in a new block. Moreover, he can add his own transactions to the pool.

This way of handling transactions creates several interesting attack scenarios in blockchains that support smart contracts, because transactions execute operations in these smart contracts. Even though no private keys are leaked, the intruder is still able to call the smart contract.

The only difficulty of such an attack is that the malicious miner must mine a block containing his malicious transaction before other miners include the original one. However, if he fails he can try again with the next block.

OK, it is time to check some potential scenarios.

Front-Running (aka Transaction-Ordering Dependence)

The first two scenarios use the front-running attack, which exploits two facts: the order of transactions (e.g. within a block) is easy to manipulate, and the pool of pending transactions is publicly visible before they are included in a block.

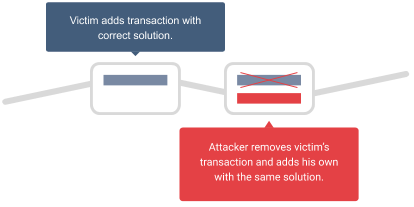

Scenario 1: Transaction takeover via race condition

The first scenario is a natural consequence of the front-running attack. Let us assume that a smart contract (e.g. a smart contract game) pays a prize to whoever sends it the so far unknown solution to some problem. The malicious miner monitors the transactions in the pool and searches for one that is sent to that smart contract and contains the correct solution.

Then he creates his own transaction with the same data (solution) and includes it in the chain. The contract cannot know who was the first and gives the prize to the malicious attacker. He does not risk any cryptocurrency because if he manages to mine the block the transaction fee is returned to him. Otherwise, if he does not mine the block, he loses his chance to win the prize but no cryptocurrency.

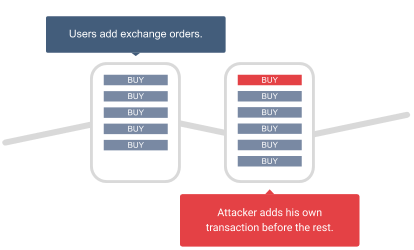
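The takeover can be illustrated with a minimal Python sketch. It is a toy model, not real blockchain code: `PuzzleContract`, `Tx` and the two mining functions are hypothetical names introduced only for this illustration, and the sketch assumes the miner simply copies every pending payload under his own address and places the copies first in his block.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    solution: str


class PuzzleContract:
    """Toy contract: the first sender of the correct solution wins the prize."""

    def __init__(self, correct_solution):
        self._correct = correct_solution
        self.winner = None

    def submit(self, tx):
        # The contract only sees the order inside the block, not who was first in the pool.
        if self.winner is None and tx.solution == self._correct:
            self.winner = tx.sender


def mine_block_honestly(pool, contract):
    # Transactions are executed in the order they arrived in the pool.
    for tx in pool:
        contract.submit(tx)


def mine_block_maliciously(pool, contract, miner):
    # The miner copies each pending payload under his own address
    # and puts the copies in front of the original transactions.
    copies = [Tx(sender=miner, solution=tx.solution) for tx in pool]
    for tx in copies + list(pool):
        contract.submit(tx)


pool = [Tx(sender="alice", solution="42")]

honest = PuzzleContract("42")
mine_block_honestly(pool, honest)

attacked = PuzzleContract("42")
mine_block_maliciously(pool, attacked, miner="mallory")

print(honest.winner, attacked.winner)  # prints "alice mallory"
```

With the same pool, the only thing that changes the winner is who builds the block.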

Scenario 2: Future prediction

Monitoring the transaction pool also reveals which operations will be executed before they are actually added to the blockchain. This information makes it possible to act earlier, which is especially useful in applications such as cryptocurrency exchanges.

Imagine that you can see the trend on the exchange just before it happens. This is a great advantage for a trader. When a miner sees that many sell/buy orders have been placed, he will add his own sell/buy order as the first one in the block he mines.

The above-mentioned attacks can also be performed by other users, not necessarily miners, as they also can monitor the transaction pool. The difference is that they cannot mine new blocks so they cannot add their own transaction directly. However, such a scenario is available for them thanks to the gas limit and fee (price) of the transactions.

When a malicious user wants the miners to mine his transaction before the original one, he can set a higher fee. The fee should be high enough for the miner to pick his transaction first, yet lower than the expected profit so that the attack remains economically advantageous.

In this case the malicious attacker risks the fee value which he loses if the original transaction is mined as the first one, unless the smart contract rejects new submissions after it is solved.
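The fee condition described above can be sketched in Python. This is a simplification under a stated assumption: miners are modelled as ordering pending transactions purely by the offered fee, highest first. The function names and the numbers are hypothetical and serve only as an illustration.

```python
def order_by_fee(pool):
    """Return pending transactions sorted by offered fee, highest first.

    Assumption: miners pick transactions purely by fee.
    """
    return sorted(pool, key=lambda tx: tx["fee"], reverse=True)


def front_run_is_rational(victim_fee, attacker_fee, prize):
    """The attacker must outbid the victim but still pay less than the prize."""
    return victim_fee < attacker_fee < prize


# The original transaction is already waiting in the pool.
pool = [{"sender": "alice", "fee": 2}]

# The attacker copies the payload and offers a higher fee.
pool.append({"sender": "mallory", "fee": 5})

ordered = order_by_fee(pool)
print([tx["sender"] for tx in ordered])  # prints "['mallory', 'alice']"
```

In this model a fee of 5 is a rational bid for a prize of 100, while a fee of 150 would outbid the victim but cost more than the attack earns.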

Business logic abuse

Imagine a smart contract that lets you get an answer to any question using crowd-sourced intelligence (people answer the question using blockchain technology). When you deploy the contract you specify:

- the question,

- the possible answers,

- the first person that will answer the question (call him reporter), and

- the time after which the answer must be given.

When the reporter answers the question, other people can open a dispute and together vote for the right answer.

Why would we need such smart contracts? They work as oracle contracts and make it possible to control the business logic of other contracts depending on the oracle’s answers. When you create a contract that behaves differently depending on the answer, you just need to assume that the crowd will answer correctly.

As all transactions (including contract deployment and reporting the answer) cost some gas, the contract creator must deposit some amount of cryptocurrency in case the designated reporter does not show up within the specified time. It is used to cover the gas cost of another reporter who is allowed to substitute for the designated one. “This prevents the scenario where the first public reporter’s gas costs are too high for reporting to be profitable.”

The no-show gas bond (as it is called) is set at twice the average gas cost of reporting during the previous (predefined) period of time.

Basically, the required deposit is calculated depending on the gas price for those transactions that were used for reporting in the previous period of time.
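The rule "twice the average gas cost of reporting" can be written down as a short Python sketch. The function name `no_show_gas_bond`, the cost unit and the sample values are assumptions made for illustration; they are not taken from the Augur code base.

```python
def no_show_gas_bond(reporting_costs):
    """Deposit required from a contract creator.

    Equals twice the average gas cost paid for reporting
    in the previous period (costs in an arbitrary unit).
    """
    if not reporting_costs:
        raise ValueError("at least one reporting cost is needed")
    return 2 * sum(reporting_costs) / len(reporting_costs)


# Hypothetical gas costs of three reports from the previous period.
previous_period = [10, 12, 8]

print(no_show_gas_bond(previous_period))  # prints "20.0"
```

Because the bond is a plain average, every single reporting transaction from the previous period influences the deposit that the next creators must pay.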

A malicious user could manipulate the gas price of his reporting transaction to make contract creation too expensive for other users. That would break the whole system because no new contracts would be created. Such a scenario is rather unlikely for typical malicious users, because they would have to send a reporting transaction with a very high gas price and pay for it.

However, the situation is completely different when we take a malicious miner into account. The difference is that the gas fees from all transactions in a block are transferred to the miner (to his coinbase address, to be exact).

The scenario is the following:

- The malicious miner creates a contract with himself as the designated reporter.

- The malicious miner creates a malicious reporting transaction with a very high gas price.

- The malicious miner tries to mine a block that includes the malicious transaction.

- When the block is mined:

  - The high cost of the malicious transaction is transferred back to the miner.

  - The next legitimate creators must pay high deposits that are too expensive.

  - No more contracts are deployed and the system is broken.

This was exactly the case in a report on the Augur project submitted on HackerOne.

“By creating a market with themselves as designated reporter and setting a very high gas price for their own block at no cost to themselves, miners can manipulate the gas reporting bond.”
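The miner scenario can be illustrated with a small numeric sketch in Python. All names and values are hypothetical, and the model is deliberately simplified: the bond is twice the average reporting cost, and the whole fee of a transaction goes to whoever mines the block that contains it.

```python
def no_show_gas_bond(reporting_costs):
    """Twice the average gas cost of reporting in the previous period."""
    return 2 * sum(reporting_costs) / len(reporting_costs)


def net_cost(fee_paid, mined_by_sender):
    """Cost actually borne by the sender of a transaction.

    Simplification: the full fee goes to the miner of the block,
    so a miner who mines his own transaction gets the fee back.
    """
    return 0 if mined_by_sender else fee_paid


honest_costs = [10, 12, 8]   # hypothetical reporting costs
malicious_fee = 1_000_000    # hypothetical inflated reporting cost

bond_before = no_show_gas_bond(honest_costs)
bond_after = no_show_gas_bond(honest_costs + [malicious_fee])

print(bond_before)  # prints "20.0"
print(bond_after)   # prints "500015.0"
print(net_cost(malicious_fee, mined_by_sender=True))   # prints "0"
print(net_cost(malicious_fee, mined_by_sender=False))  # prints "1000000"
```

A single inflated report raises the deposit by several orders of magnitude, and it costs the miner nothing as long as he mines the block himself. An ordinary user would have to pay the full fee for the same effect.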

Mitigation

Some consensus protocols, like Proof of Authority, accept the described insider threat risk because the validators are trusted third parties. Such an approach is acceptable in a private blockchain, where it is easy to manage the nodes, control the chain and respond to a detected anomaly in the chain, including by hard-forking from a point in the past.

In the case of public blockchains it is much harder to achieve such flexibility because the chain is constantly growing and implementing even a small change in a big decentralized network is a huge challenge (as many hard forks have shown).

However, there are also mitigations that can be implemented in public blockchains at the smart contract layer, independently of the consensus protocol (computationally expensive Proof of Work or less decentralized Proof of Stake).

Smart Contract Security Verification Standard (SCSVS)

First of all, I would recommend using SCSVS (https://securing.github.io/SCSVS/) as a security guideline to design and implement secure smart contracts. It is a standard in the form of a checklist that covers the whole SDLC process of smart contracts.

Among the many security aspects the SCSVS focuses on, it also covers the insider threat in the form of miners/validators or contract creators.

The first category, “V1: Architecture, design and threat modelling”, helps to identify such a threat in the design phase through a threat modelling session.

1.1 Verify that every introduced design change is preceded by an earlier threat modelling.

V2: Access control

2.2 Verify that new contracts with access to the audited contract adhere to the principle of minimum rights by default. Contracts should have a minimal or no permission until access to the new features is explicitly granted.

2.3 Verify that the creator of the contract complies with the rule of least privilege and his rights strictly follow the documentation.

2.4 Verify that the contract enforces the access control rules specified in a trusted contract, especially if the dApp client-side access control is present (as the client-side access control can be easily bypassed).

V8: Business logic

8.2 Verify that the business logic flows of smart contracts proceed in a sequential step order and it is not possible to skip any part of it or to do it in a different order than designed.

8.3 Verify that the contract has business limits and correctly enforces it.

8.6 Verify that the sensitive operations of contract do not depend on the block data (i.e. block hash, timestamp).

8.7 Verify that the contract uses mechanisms that mitigate transaction-ordering dependence (front-running) attacks (e.g. pre-commit scheme).

If you have identified a potential risk in a particular functionality, you can either change its logic (e.g. restrict the rights of the contract creator to the absolute minimum) or use a mechanism that mitigates the risk (e.g. the commit-reveal scheme described below for functionalities that are vulnerable to front-running).

In the case of the Augur project, the team decided to change the functionality and remove the gas reporting bond, whose value was calculated from a gas value that the miner can manipulate.

Commit-reveal scheme (aka blind commitments)

A simple mitigation of the front-running attack is to use the commit-reveal scheme, also known as blind commitments.

It divides the business process into two consecutive phases. For example, the process of sending some secret solution is divided into two consecutive transactions:

- the hashed value of the secret solution (with a nonce to mitigate replay attacks) is sent to the contract; this is the commit part,

- the real value and the nonce are sent to the contract; this is the reveal.

The reveal transaction can be sent only once commit transactions are no longer accepted. When both transactions are confirmed, the contract verifies that they come from the same sender and that the hash of the revealed value and nonce matches the committed hash.

This scheme allows the sender to reserve a slot for his solution. The malicious miner cannot see the solution until it is revealed, and after that he cannot post it as his own because he would first have to send a commit transaction, which is no longer accepted during the reveal phase.
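The two phases can be sketched as a toy Python model. It is an illustration under stated assumptions, not a contract implementation: `CommitRevealContract` and `commitment` are hypothetical names, SHA-256 stands in for whatever hash function the contract would use, and the sender's address is mixed into the hash (an addition to the description above) so that a commitment copied from the pool is useless to anyone else.

```python
import hashlib
import secrets


def commitment(sender, solution, nonce):
    """Hash binding the sender, the secret solution and a random nonce."""
    data = sender.encode() + b"|" + solution.encode() + b"|" + nonce
    return hashlib.sha256(data).hexdigest()


class CommitRevealContract:
    """Toy two-phase contract: commit a hash first, reveal the value later."""

    def __init__(self, correct_solution):
        self._correct = correct_solution
        self._commits = {}  # sender -> committed hash
        self.phase = "commit"
        self.winner = None

    def commit(self, sender, digest):
        if self.phase != "commit":
            raise RuntimeError("commit phase is closed")
        self._commits[sender] = digest

    def close_commit_phase(self):
        self.phase = "reveal"

    def reveal(self, sender, solution, nonce):
        if self.phase != "reveal":
            raise RuntimeError("reveal phase has not started")
        # The reveal must match a commitment made earlier by the same sender.
        if self._commits.get(sender) != commitment(sender, solution, nonce):
            return False
        if self.winner is None and solution == self._correct:
            self.winner = sender
        return True


contract = CommitRevealContract("42")
nonce = secrets.token_bytes(16)

contract.commit("alice", commitment("alice", "42", nonce))
contract.close_commit_phase()

# The attacker sees alice's pending reveal and replays it first.
stolen = contract.reveal("mallory", "42", nonce)
accepted = contract.reveal("alice", "42", nonce)

print(stolen, accepted, contract.winner)  # prints "False True alice"
```

Even when the copied reveal is executed first, it is rejected because the attacker has no matching commitment, and a late call to `commit` raises an error because the commit phase is closed.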

Conclusions

- The insider threat affects any company or application, regardless of whether they use blockchain or not. Moreover, blockchain technology introduces a new type of insider threat: the miners, the validators and the contract creators. Even though in some cases, like private blockchains, the risk they introduce can be partially accepted, it should be carefully analyzed in the case of public blockchains and the smart contracts published on them.

- The most common risk that miners and validators bring, which is the front-running attack, comes from the fact that they can view the transactions before they are added to the blockchain and they can inject their own transaction.

- Another type of risk associated with the above-mentioned threat actors, and also with contract creators, appears when the contract’s business logic depends on values that they can manipulate.

- All these risks introduced by insider threats can be mitigated: some by generic solutions (e.g. the commit-reveal scheme) and others by solutions specific to particular smart contracts (e.g. a change in the business logic).

- In any case, I recommend using SCSVS (https://securing.github.io/SCSVS/) as a security guideline to design and implement secure smart contracts.

The Smart Contract Security Verification Standard (SCSVS) is a FREE 13-part checklist created to standardize the security of smart contracts for developers, architects, security reviewers and vendors.

If you are interested in a more general view of the security of blockchain applications, check out my other article.

Head of Blockchain Security